Hi, I'm Jonathan Wandera, a Data Architect designing scalable data platforms, warehouses, and analytics systems.

I specialize in designing and building modern data platforms that power analytics, machine learning, and business intelligence at scale. My work focuses on building reliable data pipelines, scalable data warehouses, and well-modeled datasets that enable organizations to make confident, data-driven decisions.

With experience across the modern data stack, I design architectures that support large-scale ingestion, transformation, and analytics workloads. My work includes building batch and streaming pipelines, implementing dimensional and enterprise data models, and enabling self-service analytics across organizations.

I am particularly passionate about data platform architecture, data modeling strategy, and building systems that make high-quality data accessible to engineers, analysts, and machine learning teams. My focus is always on delivering measurable business impact through scalable and maintainable data systems.

“Transforming complex data ecosystems into reliable, scalable platforms that unlock real business value” is my motto.

22+ Years of Experience

250+ Data Models Designed

50+ Data Pipelines Built

999+ TB of Data Processed

My Career

Experience

Staff Data Engineer / Information Management Specialist

U.S. Department of State — Uganda Mission

(2021 – Present)

Role

Lead data platform engineering initiatives supporting operational analytics and program monitoring for the U.S. President’s Emergency Plan for AIDS Relief (PEPFAR). Design scalable enterprise data platforms that integrate operational systems and enable data-driven decision-making across mission leadership, program teams, and international partners.

Key Accomplishments

- Architected and deployed a self-service enterprise data lake integrating 10+ operational systems, enabling cross-organizational analytics through standardized ingestion pipelines and scalable data models.

- Reduced reporting latency by 60% across leadership dashboards by building automated ELT pipelines with modular transformations and orchestrated workflows.

- Engineered high-reliability data pipeline infrastructure with sandbox testing environments, automated data validation, and observability monitoring.

- Enabled analytics for $2B+ public health investments by consolidating operational, donor program, and logistics datasets into centralized analytics models.

- Led cross-team architecture initiatives across U.S. and international stakeholders to align data infrastructure with strategic planning and program monitoring requirements.

Enterprise Data Architect / Integrated Solutions Architect

U.S. Department of State — Uganda Mission

(2016 – 2021)

Role

Led enterprise architecture and modernization initiatives across mission operational systems. Consolidated fragmented data platforms into unified analytics environments supporting national public health programs, mission leadership reporting, and operational planning.

Key Accomplishments

- Designed an agile national COVID-19 reporting data warehouse integrating data from 1,000+ clinics, national surveillance systems, and border monitoring platforms.

- Consolidated fragmented operational systems by designing a centralized enterprise data architecture that enables mission-wide reporting.

- Implemented an analytics data mart for OBMS (Overseas Business Management System) Motor Pool operations, improving transportation resource planning and operational visibility.

- Advised national health leadership on scalable data architecture and supervised migration of mission-critical reporting infrastructure.

- Modernized enterprise collaboration environments through deployment of Microsoft 365 and secure digital collaboration systems.

Senior Database Engineer / Data Warehouse Architect

U.S. Department of State — Uganda Mission

(2003 – 2016)

Role

Designed enterprise database architectures and large-scale data warehousing systems supporting operational intelligence, financial oversight, and program monitoring for major global health initiatives funded through the U.S. President’s Emergency Plan for AIDS Relief (PEPFAR).

Key Accomplishments

- Designed enterprise data warehouses integrating 50+ partner datasets to improve transparency and reporting accuracy for $2B+ global health funding programs.

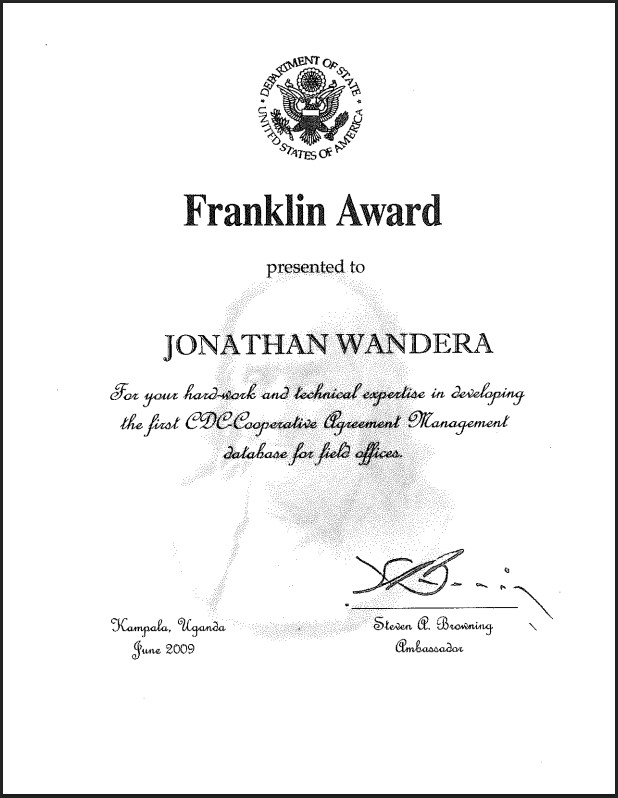

- Built cooperative agreement management reporting systems enabling leadership to track funding allocation and implementation across international partners.

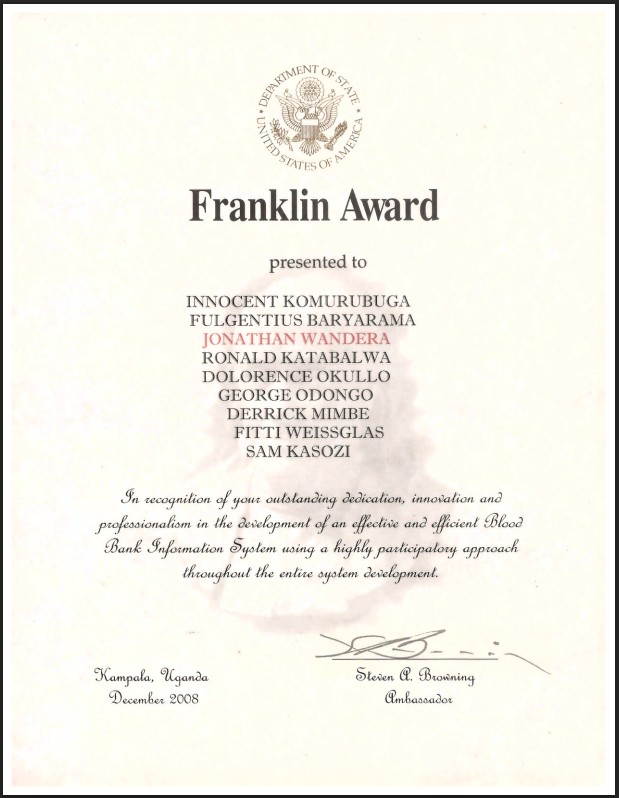

- Led development of a national blood bank information system supporting 25M+ patient records while managing a software development team.

- Developed project monitoring databases supporting 50+ health facility construction programs, reducing funding inefficiencies through structured reporting workflows.

- Introduced early enterprise analytics platforms enabling automated data consolidation and leadership dashboards for program performance monitoring.

Skills

Data Engineering Expertise

Data Platform Engineering

Designing and implementing modern data platforms that support analytics, machine learning, and operational workloads.

Data Modeling & Analytics Engineering

Designing scalable enterprise data models that support analytics, reporting, and machine learning workloads.

Business Event Analysis & Modeling (BEAM)

Designing data models around core business events and operational activities to create analytics-ready datasets that accurately reflect how the business operates in real time.

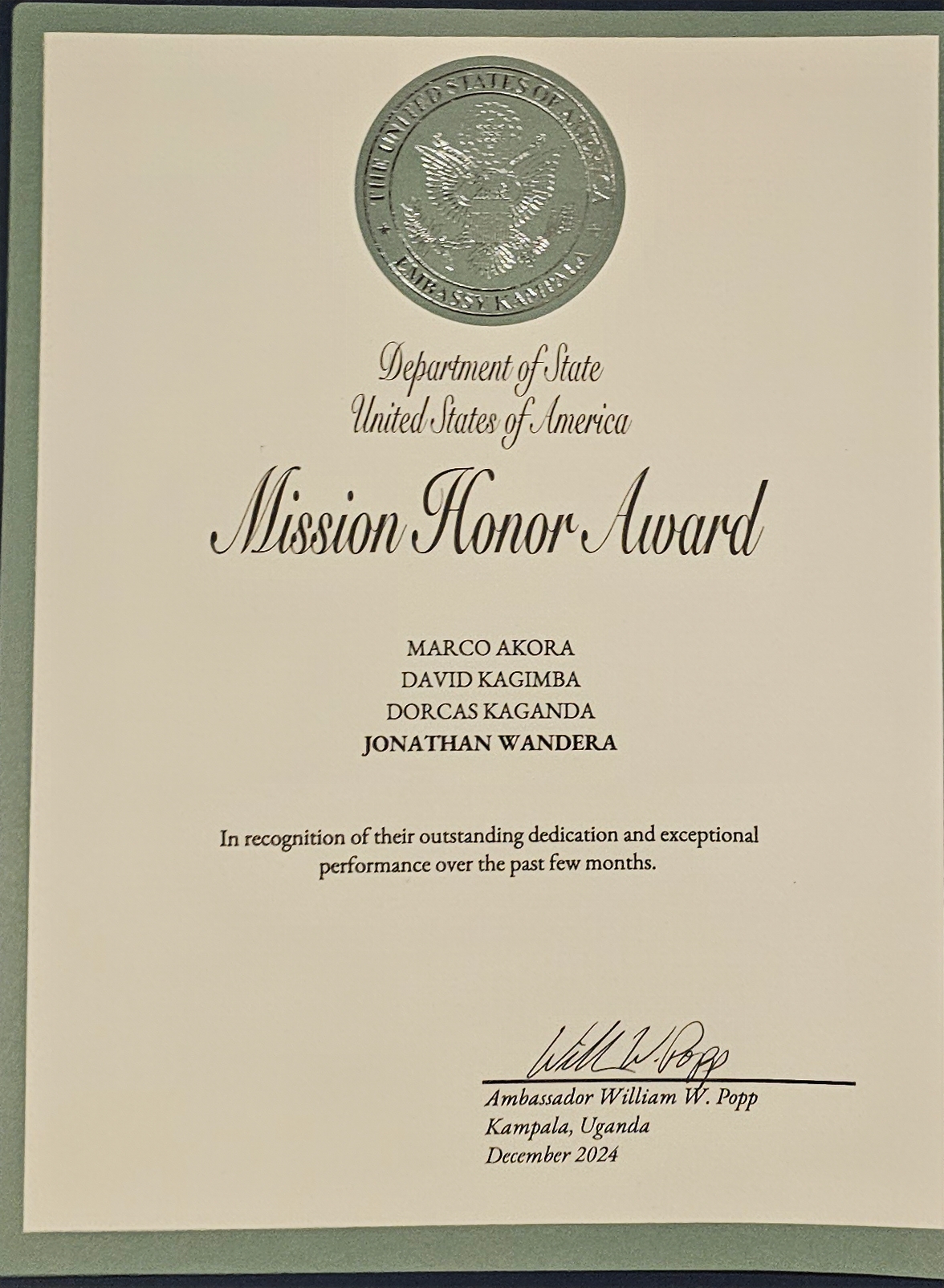

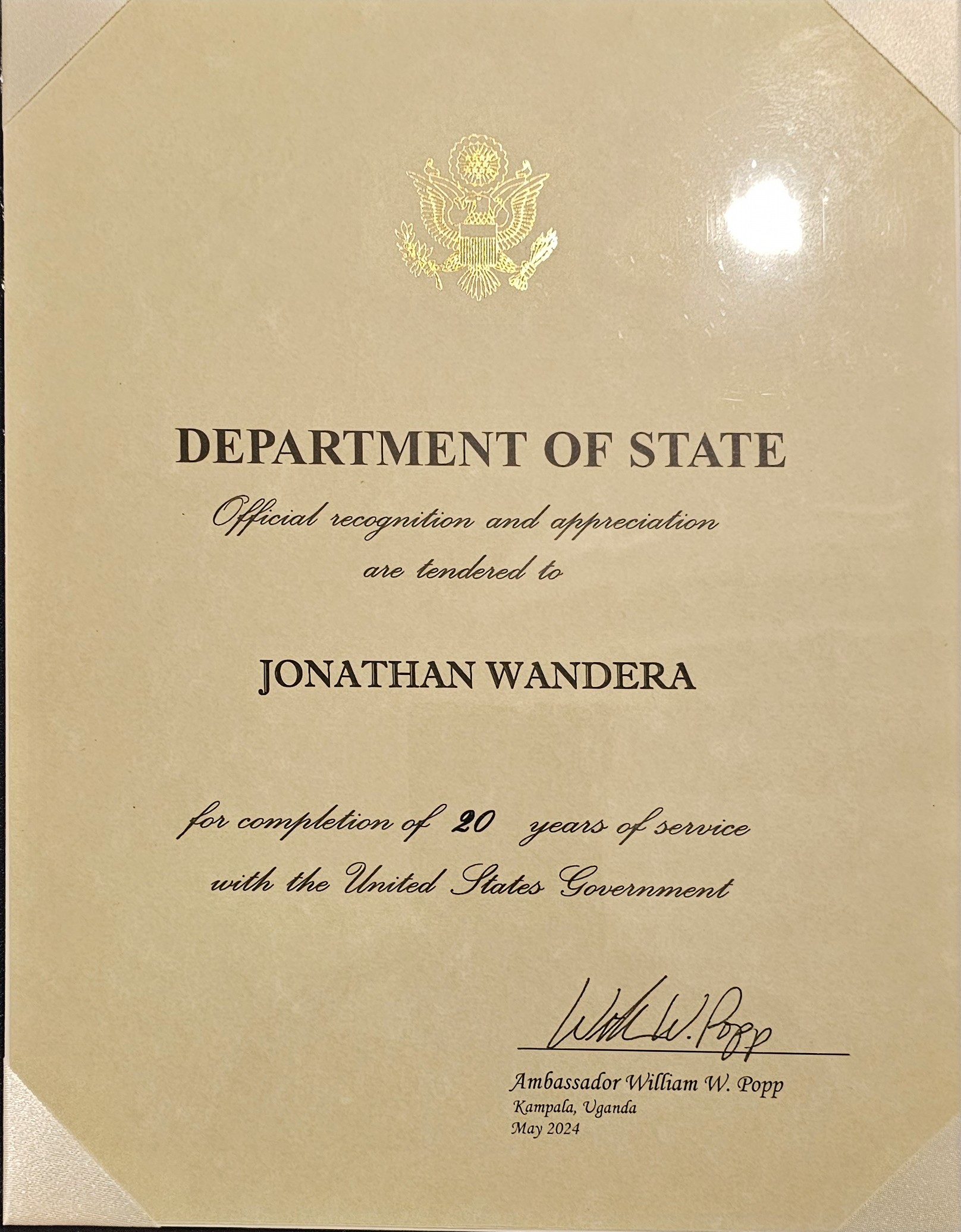

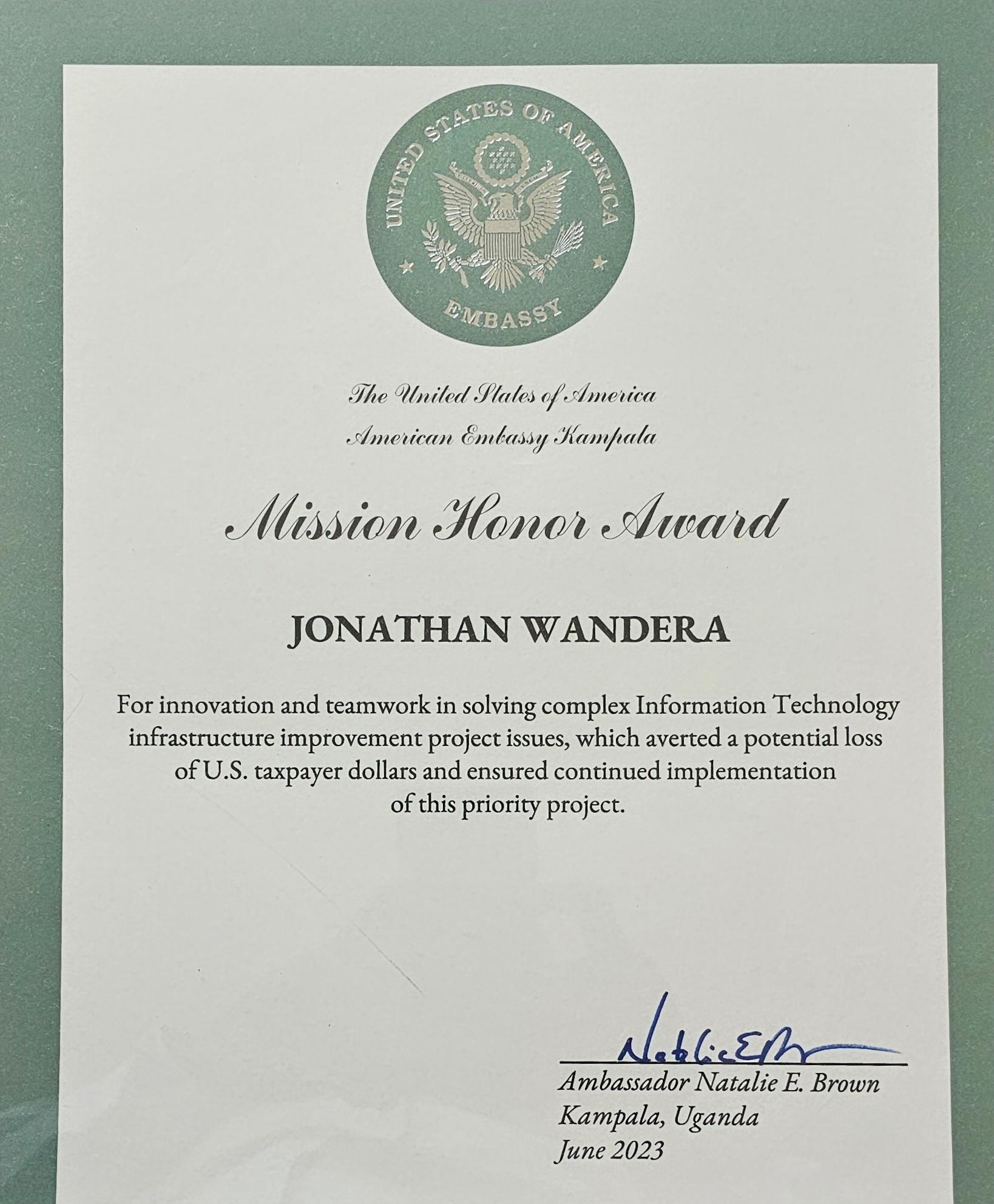

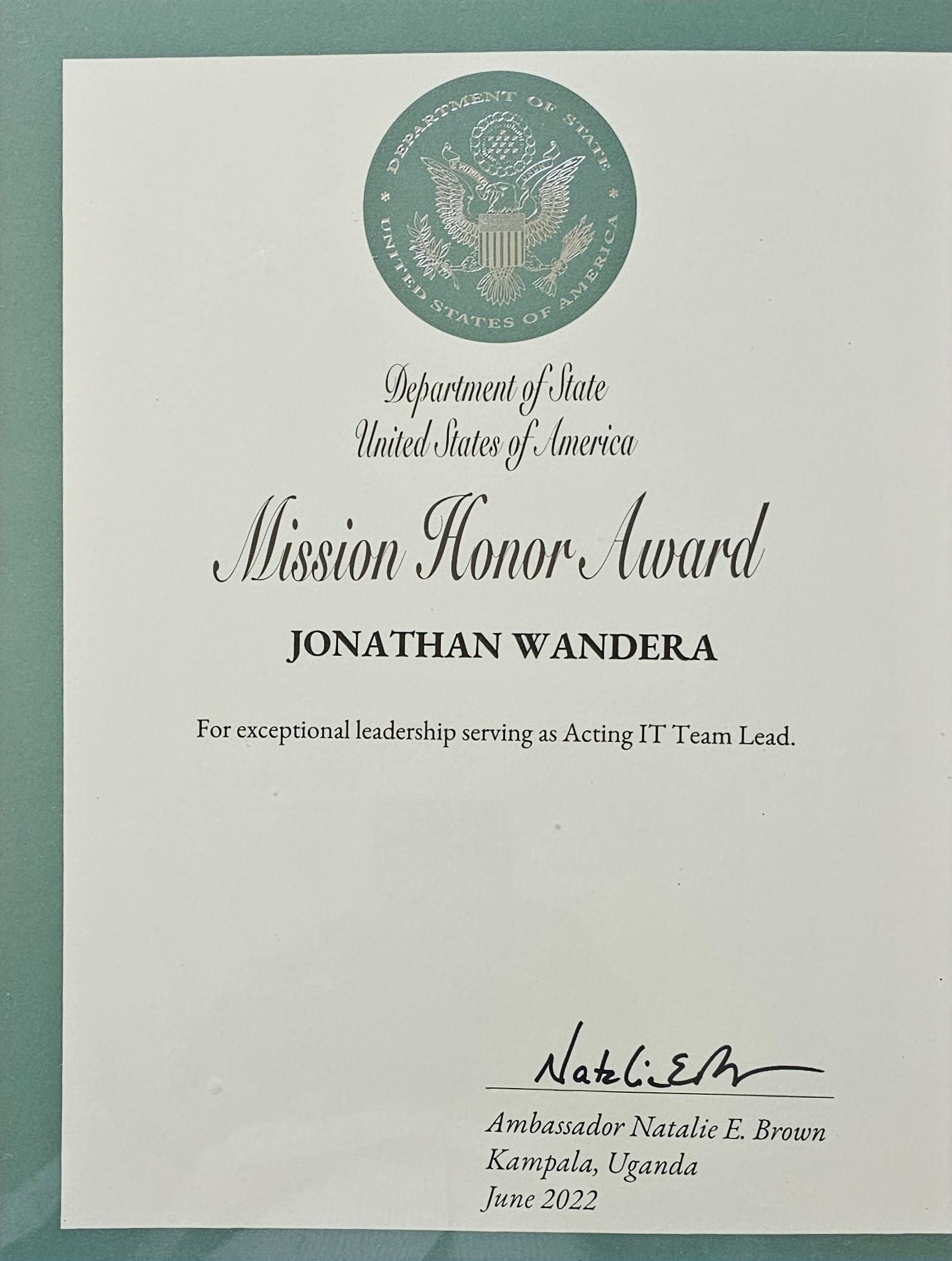

Awards

Achievements

Academic

Credentials

MSc Big Data

BSc (Statistics Major)

Notable Award

Citations

“In recognition for their outstanding dedication and exceptional performance over the past few months.”

“Official recognition and appreciation are tendered to Jonathan Wandera for completion of 20 years of service with the United States Government.”

“For innovation and teamwork in solving complex Information Technology infrastructure improvement project issues, which averted a potential loss of U.S. taxpayer dollars and ensured continued implementation of this priority project.”

“For exceptional leadership serving as Acting IT Team Lead.”

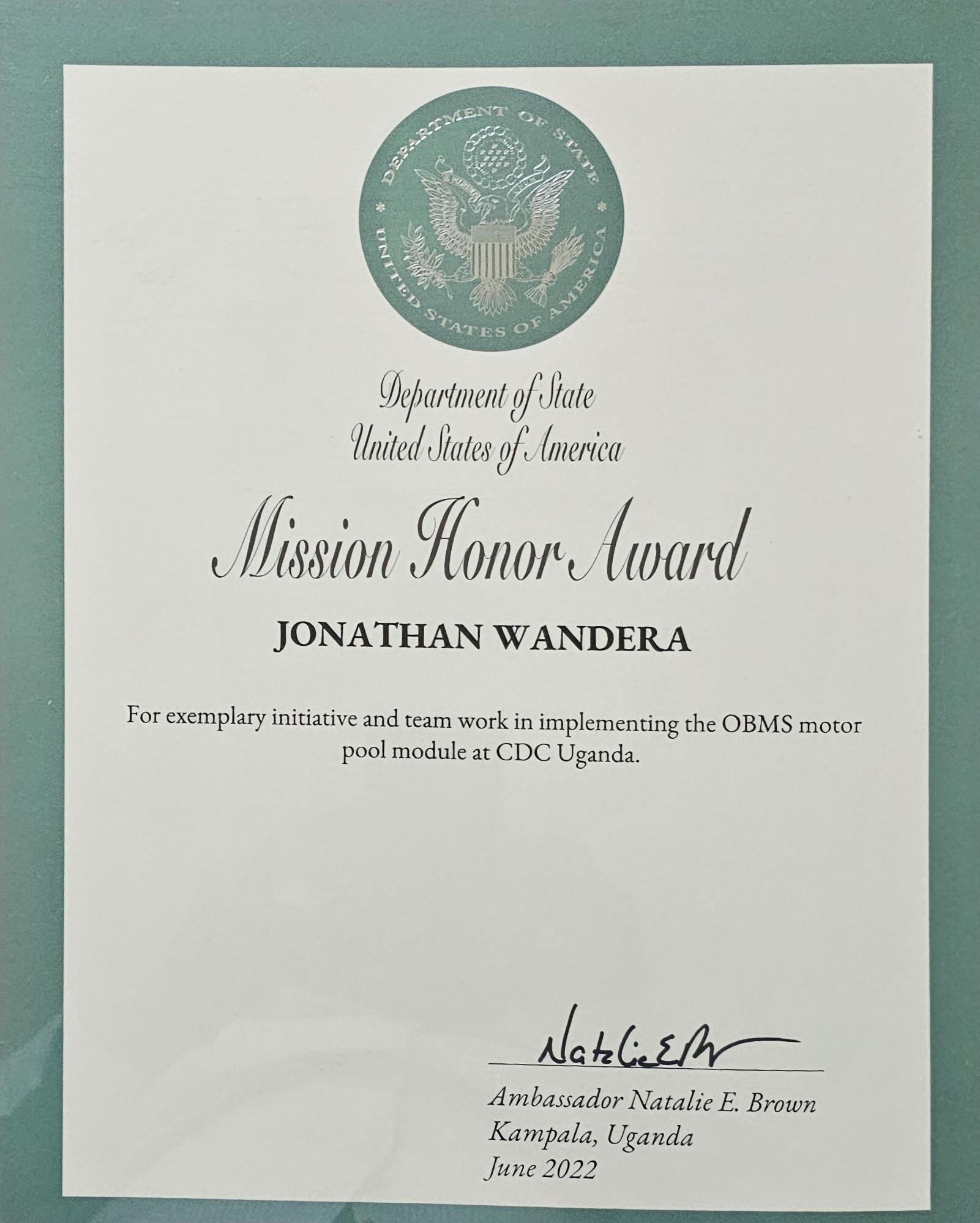

“For exemplary initiative and teamwork in implementing the OBMS (Overseas Business Management System) motor pool module at CDC (Centers for Disease Control) Uganda.”

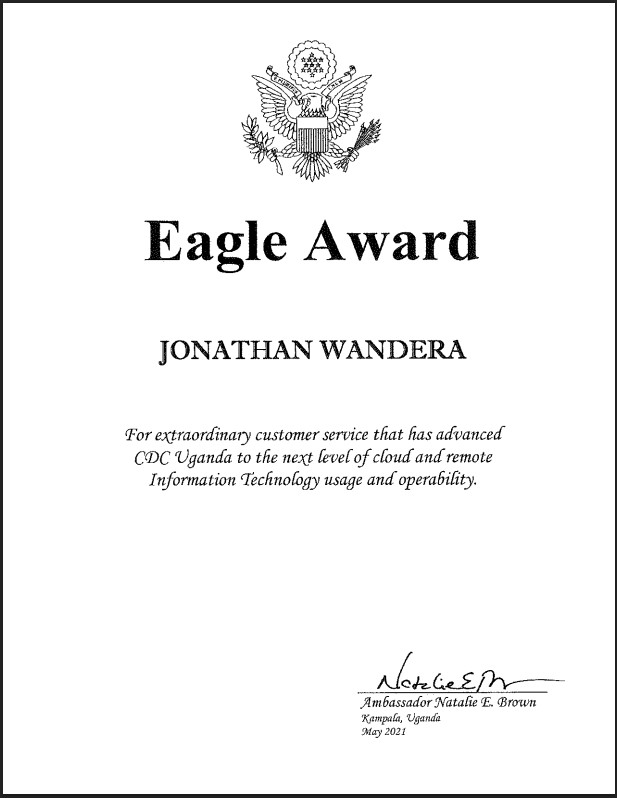

“For extraordinary customer service that has advanced CDC (Centers for Disease Control) Uganda to the next level of cloud and remote Information Technology usage and operability.”

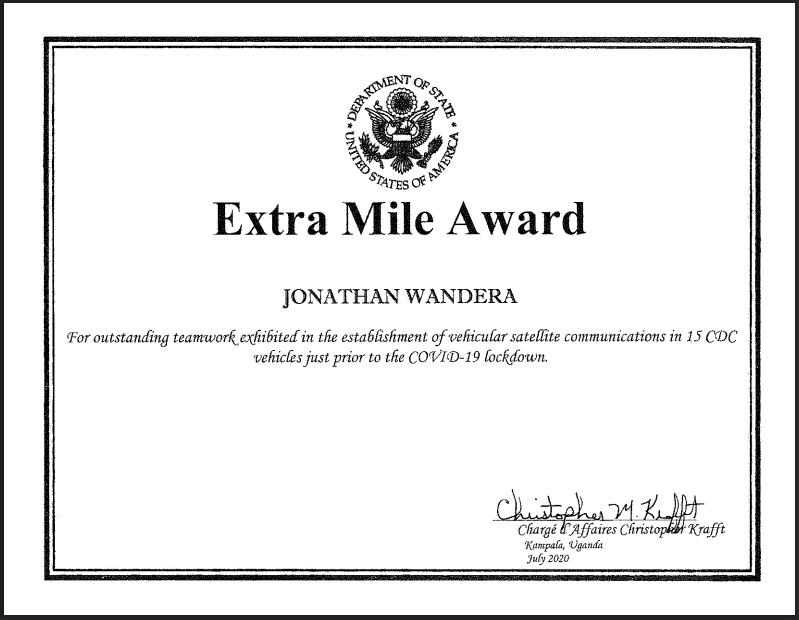

“For outstanding teamwork exhibited in the establishment of vehicular satellite communications in 15 CDC (Centers for Disease Control) vehicles just prior to the COVID-19 lockdown.”

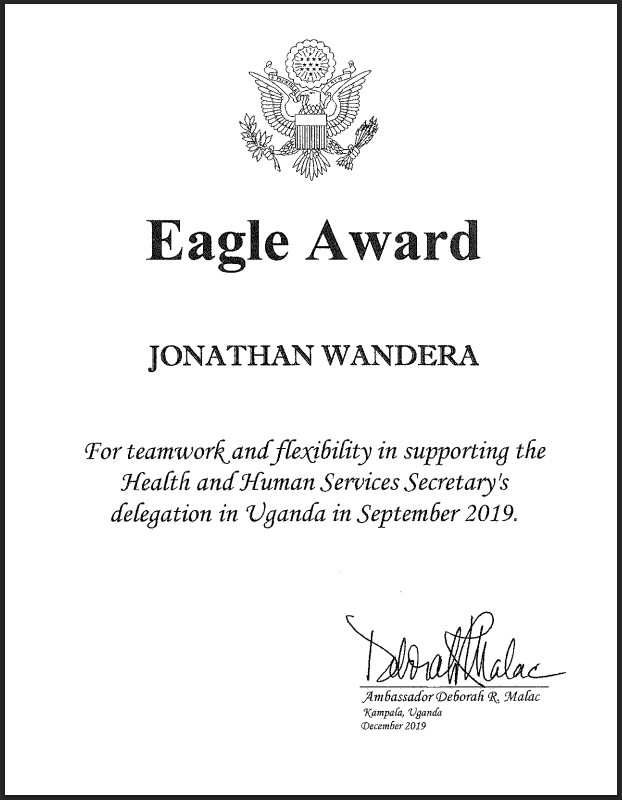

“For teamwork and flexibility in supporting the Health and Human Services Secretary's delegation in Uganda in September 2019.”

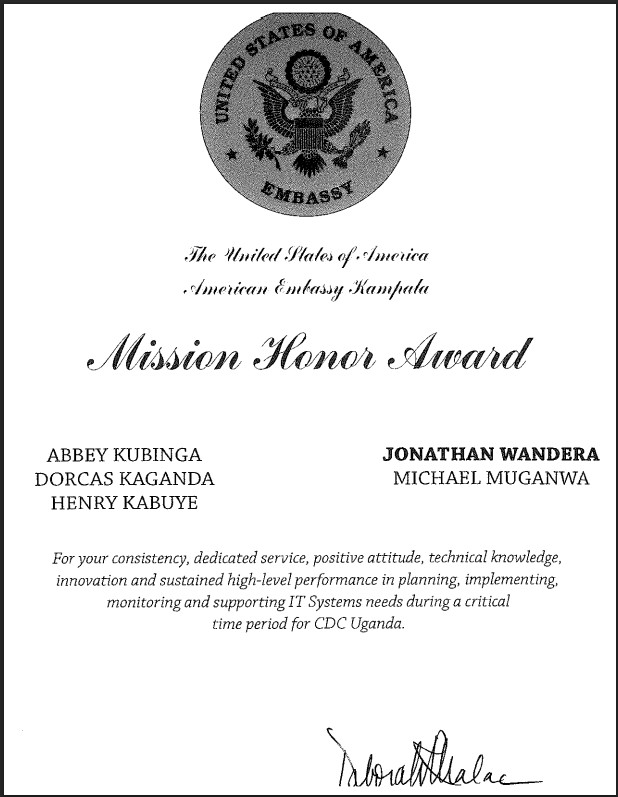

“For your consistency, dedicated service, positive attitude, technical knowledge, innovation and sustained high-level performance in planning, implementing, monitoring and supporting IT Systems needs during a critical time period for CDC Uganda.”

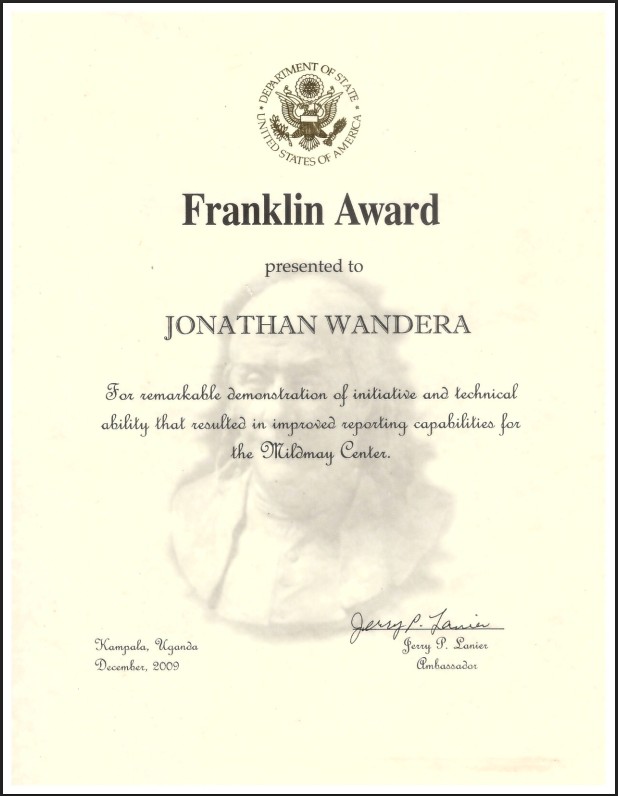

“For remarkable demonstration of initiative and technical ability that resulted in improved reporting capabilities for the Mildmay Center (a U.S. PEPFAR grantee).”

Have any Questions?